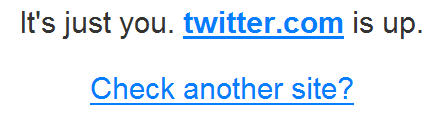

Here’s a useful tool for when you try to visit a favorite web site and discover you can’t. As the name implies, DownForEveryoneOrJustMe.com tells you if your inability to browse a “dark” site is a local, regional or universal problem. If the first part of 2008 is any indication, expect to use it more and more.

Surging web traffic is placing bigger demands on sites and servers. So it stands to reason that more frequent outages are inevitable.

At least now you can get a better idea of where the problem lies: a network firewall blocking your access, a regional internet outage, or a full-out site failure.
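For the curious, here is a rough Python sketch of the reasoning behind that kind of check. It is not how DownForEveryoneOrJustMe.com is actually built (I don't know its internals). The function names `can_reach` and `diagnose` are my own, and the results from other locations are assumed to be gathered separately, for example by running the same check on machines elsewhere.

```python
from urllib.request import Request, urlopen
from urllib.error import HTTPError, URLError


def can_reach(url, timeout=5.0):
    """Return True if `url` answers without a server-side error from this machine."""
    try:
        # A HEAD request asks for headers only, which is enough to see if the site responds.
        with urlopen(Request(url, method="HEAD"), timeout=timeout):
            return True
    except HTTPError as err:
        # The server replied; treat 5xx as "down" and anything else as "reachable".
        return err.code < 500
    except (URLError, OSError, ValueError):
        # DNS failure, refused connection, timeout, or a malformed URL.
        return False


def diagnose(local_ok, remote_results):
    """Classify an outage from one local check plus checks made elsewhere.

    `remote_results` maps a (hypothetical) vantage-point name to True/False.
    """
    if local_ok:
        return "up"
    if not remote_results:
        return "unknown"
    reachable = sum(1 for ok in remote_results.values() if ok)
    if reachable == 0:
        return "down for everyone"
    if reachable == len(remote_results):
        return "just you"
    return "possibly regional"
```

You would call `can_reach` with a site's address on your own machine, then pass that result to `diagnose` along with whatever the other locations reported. If only some of them can load the site, a regional problem is the likely culprit, though a sketch this simple can't say for sure.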

And one of the sites this tool gets used for?

The notoriously buggy Twitter, of course. According to a New York Times piece on the frustrations of unexpectedly dark sites, “Twitter was down for 37 hours this year through April — by far more than any other major social networking Web site.”

But take heart. Umang Gupta is quoted in the article with some reassurances: “There are millions of Web sites and billions of Web pages around the world … These big high-visibility problems are actually very rare.” I can add to Gupta’s comments that sites managed by groups such as mine are also doing extremely well, with near-zero downtime. We are currently using some of the most reliable network connections and web servers out there, so our downtime has been almost nonexistent.

Coincidentally, given his mention in the NY Times piece, my team actually uses Gupta’s services. He is chief executive of Keynote Systems, which monitors the web performance of client web sites, and we use it specifically to catch and minimize these types of problems.

Quietly at work 24/7 on all of our sites, Keynote’s Red Alert monitoring service lets us know immediately if any are faltering — often before a problem becomes apparent to users. It’s all an effort to ensure that sites such as DownForEveryoneOrJustMe.com don’t wind up fielding many questions about our sites!